In traditional classroom education, the teacher can easily perceive the students' engagement by observing them. Distance education lacks this feedback, which normally comes from the expressions and behaviours of the students attending the lesson. To reduce the gap between these two learning modalities, the proposed system analyses student videos recorded by the cameras of the laptops the students use to attend the lecture. The approach provides the teacher with aggregated information on didactic efficacy, avoiding the need to share student video recordings during the lecture, and therefore supports the teacher during the oral exposition of the lecture. The approach proposed in this study has been conceived as a software architecture running in the background and locally on the students' personal computers, so no sensitive data are shared over the network. It has been evaluated in two experimental sessions, dedicated respectively to a preliminary sensitivity evaluation of the proposed instrument and to the assessment of its didactic usefulness by volunteers (students and industrial employees). User evaluation, on both the student and the teacher side, reports positive feedback. The discussion can lead to insights and new considerations about learning in general, which is nowadays being forced to change significantly by the COVID-19 pandemic and may shift even further towards distance learning in the future.
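
The abstract describes an architecture in which video is processed on the student's own machine and only aggregated information reaches the teacher. The Python sketch below illustrates that idea under explicit assumptions: the per-frame attention scores in [0, 1], the `WindowSummary` payload, and the helpers `summarize_locally` and `aggregate_for_teacher` are all hypothetical and are not taken from the paper, which does not specify its data format here. The only point it makes is structural: the function that produces network payloads never receives raw frames.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class WindowSummary:
    """Hypothetical payload: the only data that would leave the student's PC."""
    window_index: int
    mean_attention: float  # average of per-frame scores in [0, 1]
    n_frames: int


def summarize_locally(frame_scores, fps, window_s):
    """Runs on the student's PC. Collapses per-frame attention scores
    (assumed to be computed locally from the camera stream) into one
    summary per time window; no image data is part of the output."""
    frames_per_window = int(fps * window_s)
    if frames_per_window < 1:
        raise ValueError("fps * window_s must cover at least one frame")
    summaries = []
    for start in range(0, len(frame_scores), frames_per_window):
        chunk = frame_scores[start:start + frames_per_window]
        summaries.append(
            WindowSummary(start // frames_per_window, mean(chunk), len(chunk))
        )
    return summaries


def aggregate_for_teacher(per_student):
    """Teacher side. Averages each window across students, weighting every
    student's window by the number of frames it covers."""
    totals = {}
    for summaries in per_student:
        for s in summaries:
            acc = totals.setdefault(s.window_index, [0.0, 0])
            acc[0] += s.mean_attention * s.n_frames
            acc[1] += s.n_frames
    return {w: acc[0] / acc[1] for w, acc in sorted(totals.items())}


# Usage: two students, 2 fps, 1-second windows.
student_a = summarize_locally([1.0, 1.0, 0.0, 0.0], fps=2, window_s=1)
student_b = summarize_locally([0.5, 0.5, 1.0], fps=2, window_s=1)
print(aggregate_for_teacher([student_a, student_b]))
```

Weighting by frame count means a student whose last window is shorter contributes proportionally less to that window; a plain unweighted mean across students would be an equally reasonable design choice.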

https://dl.acm.org/doi/abs/10.1016/j.future.2021.07.026

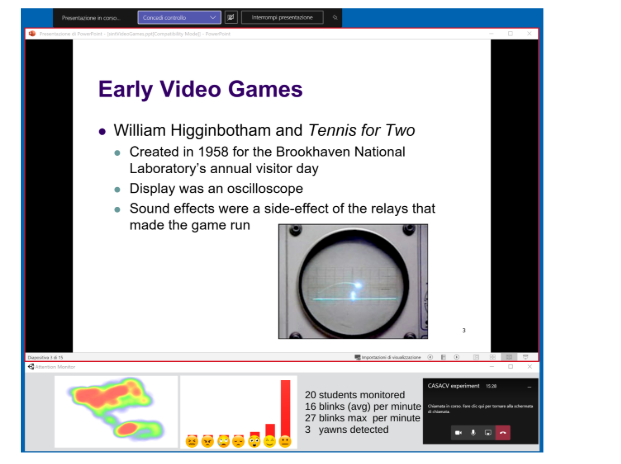

The gaze tracker maps the rotation of the eyes, frame by frame, to the point they project onto the screen. It relies on face landmark detection, with a specific focus on the eyes, and on a calibration process. The output of this module is a heatmap of the student's attention on the slide broadcast during the lecture. The software module has been implemented on the GazeFlow application and generates heatmaps like those presented in Figure 5.

Calibration is a preliminary step required for the module to run properly and reliably. The student is asked to sit in front of the monitor and to follow with the eyes a marker moving over the slides. This process indirectly estimates the distance between the observer and the monitor, so that the fixation point can be reliably inferred from any rotation of the eyes. The module is also, to some extent, invariant to head pose and to limited translations of the head, which must nevertheless be well framed in the camera stream.
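
The calibrate-then-map pipeline can be illustrated with a generic regression-based sketch. This is not GazeFlow's algorithm, which the text does not describe. It assumes, hypothetically, that the landmark stage already yields a 2-D eye feature (u, v) per frame (for example horizontal and vertical eye rotation) and fits an affine map from (u, v) to screen pixels by least squares over the calibration marker positions. For a flat screen at distance d, a small rotation θ moves the gaze point by roughly d·tan θ ≈ d·θ, so the viewing distance ends up absorbed in the fitted gains, which is one way calibration can estimate the distance only indirectly. Mapped points are then accumulated into a Gaussian-blurred heatmap. The names `fit_affine`, `gaze_point`, `heatmap`, `cell` and `sigma`, and all numeric values, are illustrative.

```python
import math


def _solve3(a, b):
    """Solve the 3x3 system a.x = b by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("calibration points are degenerate (e.g. collinear)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= f * m[col][c]
    x = [0.0] * 3
    for r in (2, 1, 0):
        x[r] = (m[r][3] - sum(m[r][c] * x[c] for c in range(r + 1, 3))) / m[r][r]
    return x


def fit_affine(eye_feats, screen_pts):
    """Least-squares fit of x = a0 + a1*u + a2*v (same form for y) from
    calibration pairs: (u, v) eye feature -> (x, y) marker position in pixels.
    Solves the normal equations (A^T A) c = A^T b for each screen axis."""
    rows = [(1.0, u, v) for u, v in eye_feats]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    coeffs = []
    for k in range(2):  # k = 0 -> screen x, k = 1 -> screen y
        atb = [sum(r[i] * p[k] for r, p in zip(rows, screen_pts)) for i in range(3)]
        coeffs.append(_solve3(ata, atb))
    return coeffs


def gaze_point(coeffs, u, v):
    """Map one eye feature (u, v) to an estimated (x, y) screen point."""
    return tuple(c[0] + c[1] * u + c[2] * v for c in coeffs)


def heatmap(points, width, height, cell=1.0, sigma=1.5):
    """Accumulate one Gaussian blob per gaze point on a height x width grid.
    Points are in screen pixels; each grid cell covers `cell` pixels.
    The result is normalised so that its peak equals 1.0."""
    grid = [[0.0] * width for _ in range(height)]
    for px, py in points:
        gx, gy = px / cell, py / cell
        for y in range(height):
            for x in range(width):
                d2 = (x - gx) ** 2 + (y - gy) ** 2
                grid[y][x] += math.exp(-d2 / (2 * sigma ** 2))
    peak = max((v for row in grid for v in row), default=0.0)
    if peak > 0:
        grid = [[v / peak for v in row] for row in grid]
    return grid


# Usage: four hypothetical calibration markers, then two frames of gaze.
calib_eye = [(0, 0), (1, 0), (0, 1), (1, 1)]
calib_screen = [(100, 200), (150, 200), (100, 170), (150, 170)]
coeffs = fit_affine(calib_eye, calib_screen)
gaze = [gaze_point(coeffs, u, v) for u, v in [(0.5, 0.5), (0.6, 0.4)]]
hm = heatmap(gaze, width=20, height=25, cell=10.0)
```

An affine map is only a reasonable approximation for small eye rotations; a second-order polynomial in (u, v), fitted the same way with more calibration markers, is a common refinement.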